Lab 4: Added Variable Plots and Bootstrapping

PSYC 7804 - Regression with Lab

Spring 2026

Today’s Packages and Data 🤗

No new packages for today!

Partial regression coefficients

In Lab 3, we used Corruption and Freedom to predict Happiness_score. We observed that the results were very different when we used both variables together compared to when we looked at them separately.

The catch is that the model that includes both predictors calculates partial regression coefficients.

Partial regression coefficients “By Hand”

Personally, the concept of partial regression coefficients starts making sense once I “compute” them myself and see what is going on behind the scenes. The residuals are once again very important here!

So, when you run multiple regression, all your slopes are calculated based on the residuals after accounting for all the other variables in the model.
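Here is a minimal sketch of the "by hand" idea. The lab's data is not reproduced here, so `sim_dat` is simulated stand-in data with made-up values (only the column names follow the lab), and the object names `reg_full`, `res_y`, `res_x`, and `reg_hand` are just illustrative.

```r
# Simulated stand-in data (hypothetical values, NOT the lab's dataset)
set.seed(7804)
n <- 140
Freedom <- rnorm(n)
Corruption <- 0.5 * Freedom + rnorm(n)
Happiness_score <- 5 - 2 * Corruption + 4 * Freedom + rnorm(n)
sim_dat <- data.frame(Happiness_score, Corruption, Freedom)

# Multiple regression with both predictors
reg_full <- lm(Happiness_score ~ Corruption + Freedom, data = sim_dat)

# Step 1: what is left of Happiness_score after accounting for Freedom
res_y <- resid(lm(Happiness_score ~ Freedom, data = sim_dat))

# Step 2: what is left of Corruption after accounting for Freedom
res_x <- resid(lm(Corruption ~ Freedom, data = sim_dat))

# Step 3: regress the residuals of Y on the residuals of Corruption
reg_hand <- lm(res_y ~ res_x)

# The slope for Corruption is the same in both models
coef(reg_full)["Corruption"]
coef(reg_hand)["res_x"]
```

The two printed slopes should agree up to rounding, which is exactly what "partial" means: the slope for Corruption only uses the part of Corruption (and of Happiness_score) that Freedom cannot explain.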

Added variable plots

Added variable plots are simply scatterplots of the residuals of \(Y\) against the residuals of one of the predictors, where both sets of residuals result from removing the variance explained by all the other variables in the model.

The Quick way: car::avPlots()

To get added variable plots in practice, you would use the avPlots() function from the car package.

When you have more than 2 predictors, it is not possible to build a visualization of all variables at once like a 3D plot. Added variable plots can help you visualize regression results no matter the number of predictors!
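As a rough sketch of what that looks like with more than 2 predictors, the example below fits a model with three predictors and passes it to `car::avPlots()`. The data is simulated stand-in data, `Extra_predictor` is a made-up third variable, and the call is only run if car happens to be installed.

```r
# Simulated stand-in data (hypothetical values, NOT the lab's dataset)
set.seed(7804)
n <- 140
sim_dat <- data.frame(Freedom = rnorm(n),
                      Extra_predictor = rnorm(n))  # made-up third predictor
sim_dat$Corruption <- 0.5 * sim_dat$Freedom + rnorm(n)
sim_dat$Happiness_score <- 5 - 2 * sim_dat$Corruption +
  4 * sim_dat$Freedom + 1 * sim_dat$Extra_predictor + rnorm(n)

# Regression with three predictors (no single 3D plot can show this)
reg_3 <- lm(Happiness_score ~ Corruption + Freedom + Extra_predictor,
            data = sim_dat)

# One added variable plot per predictor (only runs if car is installed)
if (requireNamespace("car", quietly = TRUE)) {
  car::avPlots(reg_3)
}
```

Each panel should show one predictor after the other two have been partialled out, so the slope of the line in a panel corresponds to that predictor's partial regression coefficient.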

Another Look at the 3D plane

You can see that the avPlots() function identifies 4 points per plot: the 2 points with the largest residuals and the 2 points with the highest leverage.

Bootstrapping

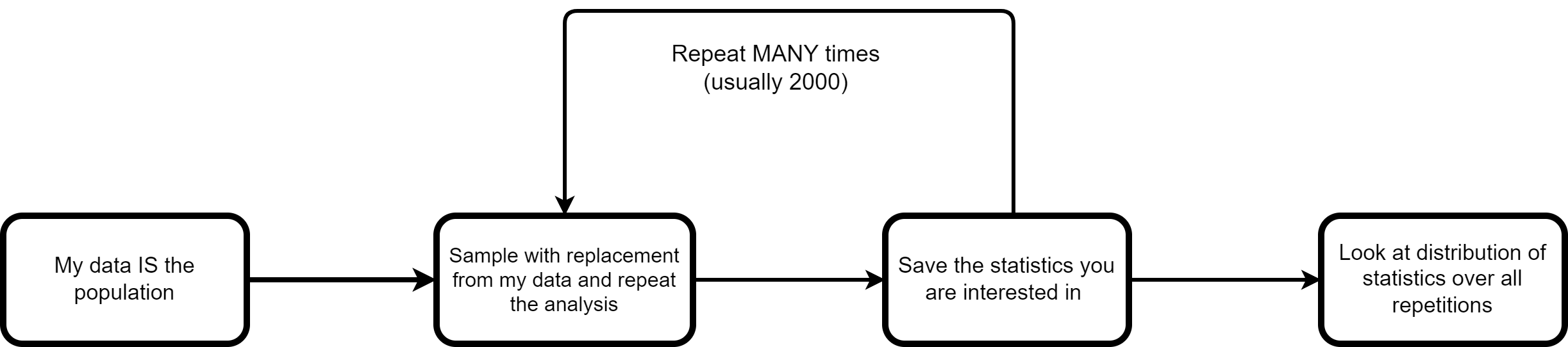

Bootstrapping (Efron, 1992) is a really smart idea for calculating confidence intervals while avoiding the math! You have probably seen some complicated equations that need to be derived to calculate confidence intervals for statistics (e.g., see here for the 95% CI of \(R^2\)).

Turns out that even more complex math goes into deriving those complicated equations 😱 Usually, that involves figuring out the theoretical sampling distribution of a statistic over an infinite number of experiments.

Instead bootstrapping says:

“By Hand” Example of Bootstrapping

As always, we can do things ourselves to get a better understanding of the process.

Bootstrap \(R^2\) code

    # empty vector to save R^2 to
    r_squared <- c()

    reg_vars <- dat[, c("Happiness_score",
                        "Corruption",
                        "Freedom")]

    set.seed(34677)

    for(i in 1:2000){
      # sample row numbers from the data, with replacement
      boot_rows <- sample(1:nrow(reg_vars),
                          replace = TRUE)
      dat_boot <- reg_vars[boot_rows, ]

      # Run regression on the resampled data
      reg_boot <- lm(Happiness_score ~ Corruption + Freedom,
                     data = dat_boot)

      # Save R^2
      r_squared[i] <- summary(reg_boot)$r.squared
    }

There is a lot of value in understanding how the code above works, but the main point is that we are running the same regression 2000 times, each time on a different resample of our data (drawn with replacement), and then saving the \(R^2\) that we get every time.

We mostly care about the 95% confidence interval.
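One common way to get that interval is the percentile method: take the 2.5th and 97.5th percentiles of the bootstrapped \(R^2\) values. The sketch below assumes that approach and uses simulated stand-in data (`sim_dat`, with made-up values), so the numbers will not match the lab's results.

```r
# Simulated stand-in data (hypothetical values, NOT the lab's dataset)
set.seed(34677)
n <- 140
Freedom <- rnorm(n)
Corruption <- 0.5 * Freedom + rnorm(n)
Happiness_score <- 5 - 2 * Corruption + 4 * Freedom + rnorm(n)
sim_dat <- data.frame(Happiness_score, Corruption, Freedom)

# Bootstrap R^2: resample rows with replacement, refit, save R^2
r_squared <- replicate(2000, {
  boot_rows <- sample(nrow(sim_dat), replace = TRUE)
  reg_boot <- lm(Happiness_score ~ Corruption + Freedom,
                 data = sim_dat[boot_rows, ])
  summary(reg_boot)$r.squared
})

# Percentile 95% CI: cut off 2.5% of the values in each tail
ci_95 <- quantile(r_squared, probs = c(0.025, 0.975))
ci_95
```

The middle 95% of the bootstrapped values plays the role of the confidence interval, with no sampling distribution derived along the way.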

The Quick way: Boot() function from car

Once again, the car package comes to the rescue.

    Bootstrap percent confidence intervals

                    2.5 %    97.5 %
    (Intercept)  3.969337  6.309630
    Corruption  -3.303885 -1.572729
    Freedom      2.963881  5.004918

The confidence interval for \(R^2\) is a bit annoying to get from car, so here we just have the CIs for the intercept and slopes.

Bootstrapping becomes more and more accurate as sample size increases. Here it may not be as accurate because we only have 140 observations, although it seems to do alright.
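For reference, a call along the following lines should produce output in the format shown above. This is a sketch that assumes the car and boot packages are installed, and it uses simulated stand-in data (`sim_dat`, made-up values), so the intervals will differ from the ones in the lab.

```r
# Simulated stand-in data (hypothetical values, NOT the lab's dataset)
set.seed(34677)
n <- 140
Freedom <- rnorm(n)
Corruption <- 0.5 * Freedom + rnorm(n)
Happiness_score <- 5 - 2 * Corruption + 4 * Freedom + rnorm(n)
sim_dat <- data.frame(Happiness_score, Corruption, Freedom)

reg_full <- lm(Happiness_score ~ Corruption + Freedom, data = sim_dat)

# Only runs if both car and boot are installed
if (requireNamespace("car", quietly = TRUE) &&
    requireNamespace("boot", quietly = TRUE)) {
  library(car)

  # Resample cases 2000 times and refit the model each time
  boot_model <- Boot(reg_full, R = 2000)

  # Percentile confidence intervals for the intercept and slopes
  boot_ci <- confint(boot_model, type = "perc")
  print(boot_ci)
}
```

By default `Boot()` bootstraps the regression coefficients, which is why the intercept and slopes come out without any extra work.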

Visualizing Bootstrap Results

You can also visualize the distribution of the bootstrapped samples for the regression slopes.
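To see what such a plot is built from, here is a "by hand" sketch in base R that bootstraps the coefficients and draws a histogram of the slopes for Corruption. It uses simulated stand-in data (`sim_dat`, made-up values), and `boot_coefs` and `slope_ci` are illustrative names.

```r
# Simulated stand-in data (hypothetical values, NOT the lab's dataset)
set.seed(34677)
n <- 140
Freedom <- rnorm(n)
Corruption <- 0.5 * Freedom + rnorm(n)
Happiness_score <- 5 - 2 * Corruption + 4 * Freedom + rnorm(n)
sim_dat <- data.frame(Happiness_score, Corruption, Freedom)

# One row per bootstrap sample, one column per coefficient
boot_coefs <- t(replicate(2000, {
  boot_rows <- sample(nrow(sim_dat), replace = TRUE)
  coef(lm(Happiness_score ~ Corruption + Freedom,
          data = sim_dat[boot_rows, ]))
}))

# Percentile 95% CI for the Corruption slope
slope_ci <- quantile(boot_coefs[, "Corruption"], probs = c(0.025, 0.975))

# Histogram of the bootstrapped slopes, with the CI limits as dashed lines
hist(boot_coefs[, "Corruption"], breaks = 40,
     main = "Bootstrapped slopes for Corruption",
     xlab = "Slope")
abline(v = slope_ci, lty = 2)
```

The histogram is an empirical stand-in for the sampling distribution of the slope, and the dashed lines mark where the percentile interval cuts it.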

summary() output through the parm= argument

References
